LLM Governance Explained

The Rules, Risks & Architecture Every PM Must Know

There is a new product layer emerging in AI teams.

It isn’t visible in UI mocks.

It isn’t inside Jira tickets.

It isn’t even written in most product strategy docs.

Yet it decides whether an AI product becomes:

a stable revenue engine

or a hallucinating liability.

That layer is LLM Governance.

And today, almost nobody talks about it.

PMs talk about prompts.

Engineers talk about pipelines.

Researchers talk about model sizes.

Founders talk about the next demo.

Meanwhile, the single most important layer needed to keep AI products reliable, safe, aligned, consistent, and legally defensible… sits unowned.

If 2024 was the year companies learned how to ship AI features,

2026 is the year companies learn how to govern them.

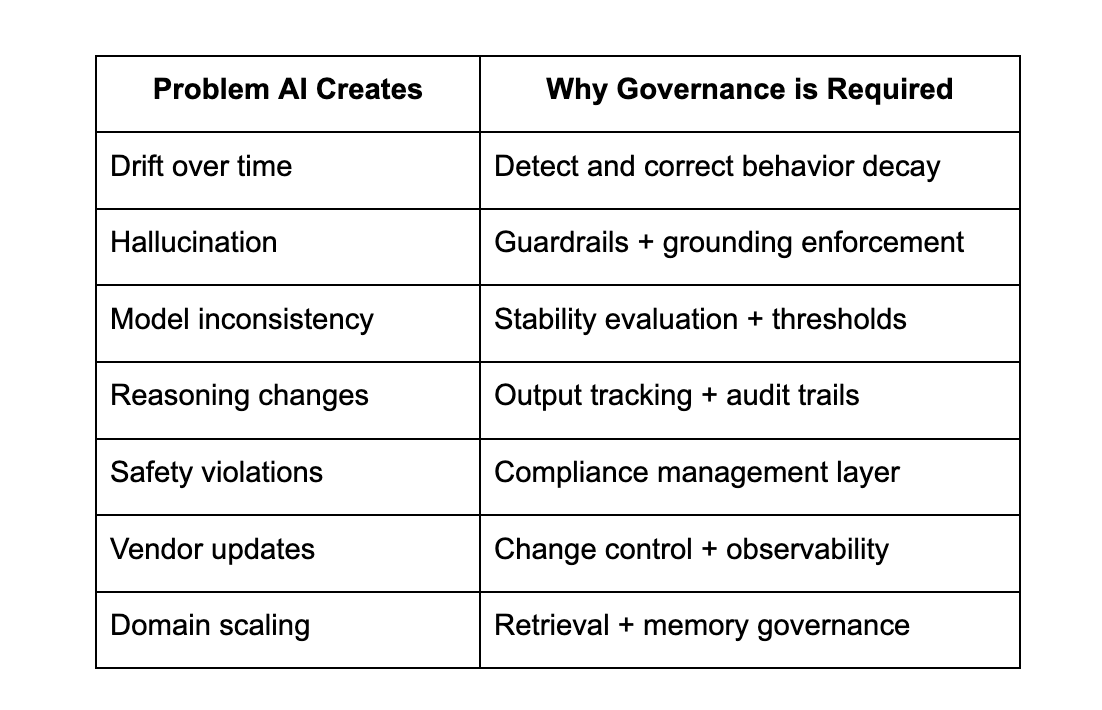

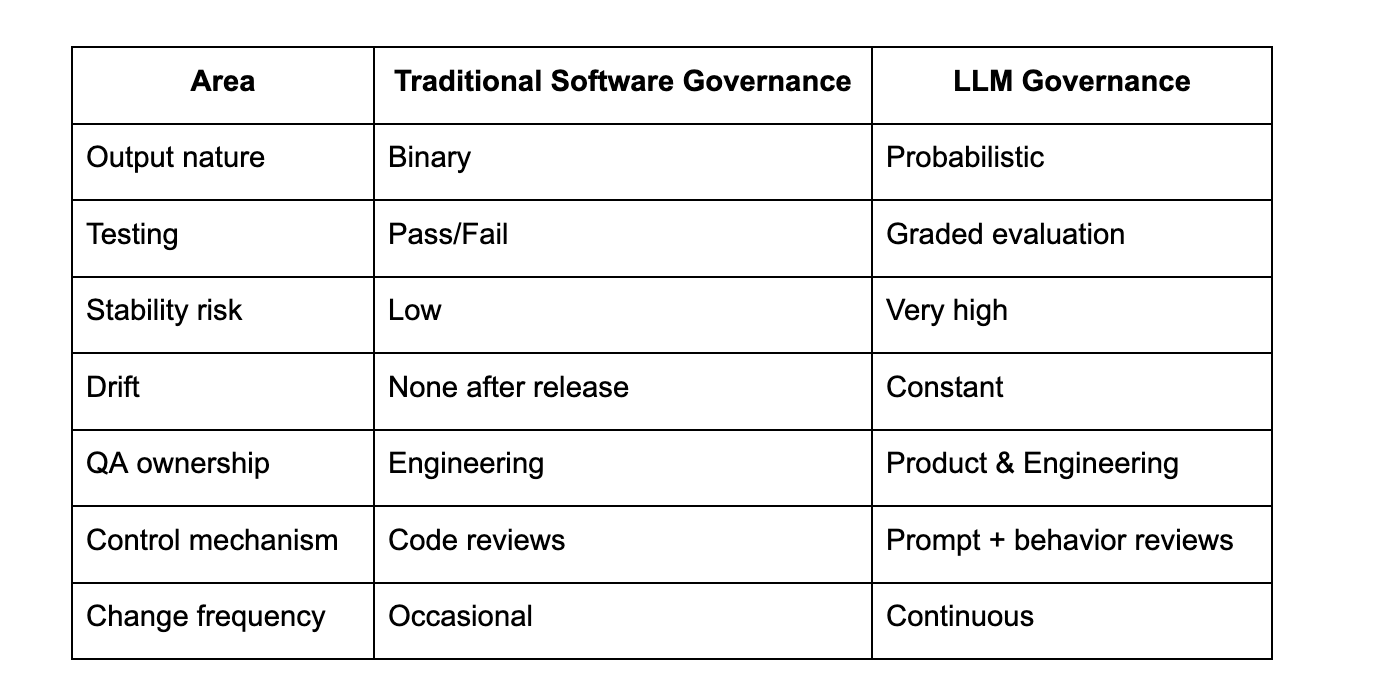

The Uncomfortable Truth: AI Doesn’t Stay the Same After Release

Traditional software stays still unless new code is deployed.

LLM products do not. Their behavior can shift even when your own code is untouched.

Why? Because their behavior shifts with:

model parameter updates

temperature shifts

data expansion

context drift

prompt decay

hardware latency changes

vendor updates

new model versions

user domain variation

This is why governance matters.
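
One way to make these silent changes visible is to record a snapshot of everything that can alter behavior without a code deploy, then diff snapshots over time. The sketch below is illustrative only: `BehaviorSnapshot`, its fields, and the version strings are hypothetical names, not part of any vendor API.

```python
import hashlib
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class BehaviorSnapshot:
    """Inputs that can change model behavior without a code deploy."""
    model_version: str    # hypothetical vendor version string
    temperature: float
    prompt_sha256: str    # hash of the system prompt text
    retrieval_index: str  # hypothetical name of the grounding index


def hash_prompt(prompt: str) -> str:
    return hashlib.sha256(prompt.encode("utf-8")).hexdigest()


def diff_snapshots(old: BehaviorSnapshot, new: BehaviorSnapshot) -> dict:
    """Return {field: (old_value, new_value)} for every field that changed."""
    old_d, new_d = asdict(old), asdict(new)
    return {k: (old_d[k], new_d[k]) for k in old_d if old_d[k] != new_d[k]}


prompt = "You are a helpful banking assistant."
january = BehaviorSnapshot("model-2025-01", 0.2, hash_prompt(prompt), "kb-v1")
june = BehaviorSnapshot("model-2025-06", 0.2, hash_prompt(prompt), "kb-v3")

changes = diff_snapshots(january, june)
print(sorted(changes))  # ['model_version', 'retrieval_index']
```

Nothing in the prompt or the temperature changed here, yet two behavior-relevant inputs did. That is the kind of change a code diff never shows.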

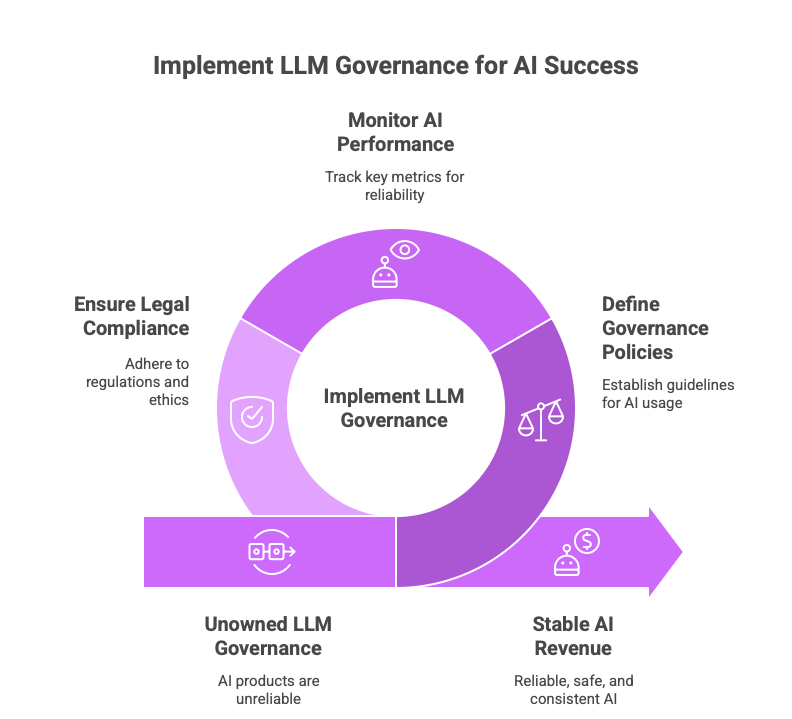

What Is LLM Governance?

LLM Governance is the discipline of controlling, monitoring, auditing, stabilizing, and validating model behavior across its lifecycle.

It is how product teams ensure AI outputs stay:

accurate

consistent

safe

compliant

explainable

traceable

and scalable

Why PMs Must Care: AI Behavior Is Product

Outputs are no longer simply right or wrong.

The same prompt can return a range of answers, and each one has some probability of being acceptable.

A PM who can’t measure behavior

cannot own product quality.
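
Measuring behavior can start very simply: sample the same prompt several times and report the fraction of answers that pass a check. This is a minimal sketch under stated assumptions. The acceptance rule, the sample answers, and the 0.95 bar are all hypothetical, and a real evaluation would use far more samples.

```python
from typing import Callable, Sequence


def pass_rate(outputs: Sequence[str], check: Callable[[str], bool]) -> float:
    """Fraction of sampled outputs that satisfy the check."""
    if not outputs:
        raise ValueError("need at least one sampled output")
    return sum(1 for o in outputs if check(o)) / len(outputs)


def mentions_refund_window(answer: str) -> bool:
    # Hypothetical acceptance rule: the answer must state the 30-day window.
    return "30 days" in answer.lower()


# Five samples of the same prompt, as if collected from five separate calls.
samples = [
    "Refunds are accepted within 30 days of purchase.",
    "You can return the item within 30 days.",
    "Refunds are available within 30 days.",
    "Returns are accepted for up to 90 days.",  # wrong answer
    "You have 30 days to request a refund.",
]

rate = pass_rate(samples, mentions_refund_window)
THRESHOLD = 0.95  # assumed quality bar, set by the product team
print(f"pass rate = {rate:.2f}, meets bar: {rate >= THRESHOLD}")
# pass rate = 0.80, meets bar: False
```

The point is the shape of the metric, not the numbers: quality becomes a rate with a threshold, not a single pass or fail.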

Why LLM Governance Exists

Why Governance Is the New Moat (Not Model Size)

The market myth:

“Bigger models = better product.”

Not true anymore.

Most teams build on the same handful of LLM providers.

The differentiation layer becomes governance.

Two teams using the same model will produce different outcomes based on:

retrieval accuracy

evaluation design

prompt architecture

monitoring discipline

guardrail strength

audit control

Governance is where superiority compounds.

The Four Pillars of LLM Governance (PM Perspective)

1. Behavioral Stability

Ensuring outputs remain consistent across:

time

domains

model updates

user segments

traffic load

Stability = user trust.
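
A rough way to put a number on stability is to compare repeated answers to the same prompt with each other. The sketch below uses surface text similarity from Python's standard `difflib`, which is an assumption of convenience: it is a crude proxy, and two answers can differ in wording while meaning the same thing. The helper name `consistency_score` is hypothetical.

```python
from difflib import SequenceMatcher
from itertools import combinations


def consistency_score(outputs):
    """Mean pairwise text similarity in [0, 1]; 1.0 means identical outputs."""
    pairs = list(combinations(outputs, 2))
    if not pairs:
        return 1.0  # zero or one output is trivially consistent
    return sum(SequenceMatcher(None, a, b).ratio() for a, b in pairs) / len(pairs)


stable = ["Refunds are accepted within 30 days."] * 3
drifting = [
    "Refunds are accepted within 30 days.",
    "You may be able to get store credit, depending on the item.",
    "Please contact support to discuss your options.",
]

print(consistency_score(stable))                                # 1.0
print(consistency_score(drifting) < consistency_score(stable))  # True
```

Tracked per user segment or per model version, a falling score is an early hint that answers are diverging.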

2. Safety & Compliance

Prevent:

misinformation

IP misuse

bias

toxicity

illegal advice

confidential leakage

Compliance is shifting left: into product decisions, not only legal review.
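
To make the idea of an output guardrail concrete, here is a minimal sketch of a pattern-based filter. The three patterns and their policy names are illustrative assumptions only. Real guardrails usually combine classifiers, allow-lists, and human review, and need legal and security sign-off.

```python
import re
from typing import List

# Illustrative patterns only; not a complete or production-grade policy.
BLOCK_PATTERNS = {
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "investment_advice": re.compile(r"\bguaranteed returns?\b", re.IGNORECASE),
}


def check_output(text: str) -> List[str]:
    """Return the names of every policy the text violates (empty = allowed)."""
    return [name for name, pattern in BLOCK_PATTERNS.items() if pattern.search(text)]


print(check_output("Your balance is available in the app."))
# []
print(check_output("This fund offers guaranteed returns. Email jane@example.com."))
# ['email', 'investment_advice']
```

Returning the policy names, not just a yes or no, lets the product decide what to do next: block, redact, or escalate.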

3. Observability & Drift Monitoring

Traditional software logs don’t detect reasoning drift.

Governance requires:

hallucination scoring

quality benchmarks

output metrics

latency & cost tracing
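
Latency and cost tracing can be sketched as a thin wrapper around every model call. Everything here is an assumption for illustration: `fake_model` stands in for a real client, whitespace word counts stand in for real tokenizer counts, and the price per 1,000 tokens is a made-up figure.

```python
import time
from dataclasses import dataclass


@dataclass
class CallTrace:
    latency_ms: float
    prompt_tokens: int
    completion_tokens: int
    cost_usd: float


def traced_call(model_fn, prompt, usd_per_1k_tokens=0.002):
    """Run model_fn(prompt) and return (answer, CallTrace)."""
    start = time.perf_counter()
    answer = model_fn(prompt)
    latency_ms = (time.perf_counter() - start) * 1000
    # Whitespace word counts stand in for real tokenizer counts.
    p_tok, c_tok = len(prompt.split()), len(answer.split())
    cost = (p_tok + c_tok) / 1000 * usd_per_1k_tokens
    return answer, CallTrace(latency_ms, p_tok, c_tok, cost)


def fake_model(prompt):
    # Placeholder for a real model client.
    return "Your card will arrive in five business days."


answer, trace = traced_call(fake_model, "When will my card arrive?")
print(trace.prompt_tokens, trace.completion_tokens)  # 5 8
```

Once every call emits a trace like this, cost per answer and latency percentiles become ordinary product metrics.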

4. Auditability & Control

Outputs must be traceable:

What dataset?

Which knowledge source?

Who changed the prompt?

What version of the model?

Traceability is protection.
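
The four questions above map naturally onto a log record. The sketch below stores them per output and chains each record to the previous one with a SHA-256 hash, so later edits to the log are detectable. Field names and values are hypothetical, and an in-memory list stands in for a real audit store.

```python
import hashlib
import json


def append_audit_record(log, *, dataset, knowledge_sources, prompt_changed_by,
                        model_version, output):
    """Append a record whose hash covers its content and the previous record's hash."""
    record = {
        "dataset": dataset,
        "knowledge_sources": knowledge_sources,
        "prompt_changed_by": prompt_changed_by,
        "model_version": model_version,
        "output": output,
        "prev_hash": log[-1]["hash"] if log else "",
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(record)
    return record


def verify_chain(log):
    """True if every record still matches its hash and links to the one before it."""
    prev = ""
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if record["prev_hash"] != prev or hashlib.sha256(payload).hexdigest() != record["hash"]:
            return False
        prev = record["hash"]
    return True


audit_log = []
append_audit_record(audit_log, dataset="faq-2025-03", knowledge_sources=["fees.md"],
                    prompt_changed_by="pm@example.com", model_version="model-2025-01",
                    output="The monthly fee is waived for students.")
append_audit_record(audit_log, dataset="faq-2025-03", knowledge_sources=["cards.md"],
                    prompt_changed_by="pm@example.com", model_version="model-2025-01",
                    output="Cards ship within five business days.")

print(verify_chain(audit_log))  # True
audit_log[0]["output"] = "The monthly fee is waived for everyone."
print(verify_chain(audit_log))  # False
```

The hash chain is one design choice among several. An append-only database or a managed logging service could serve the same purpose.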

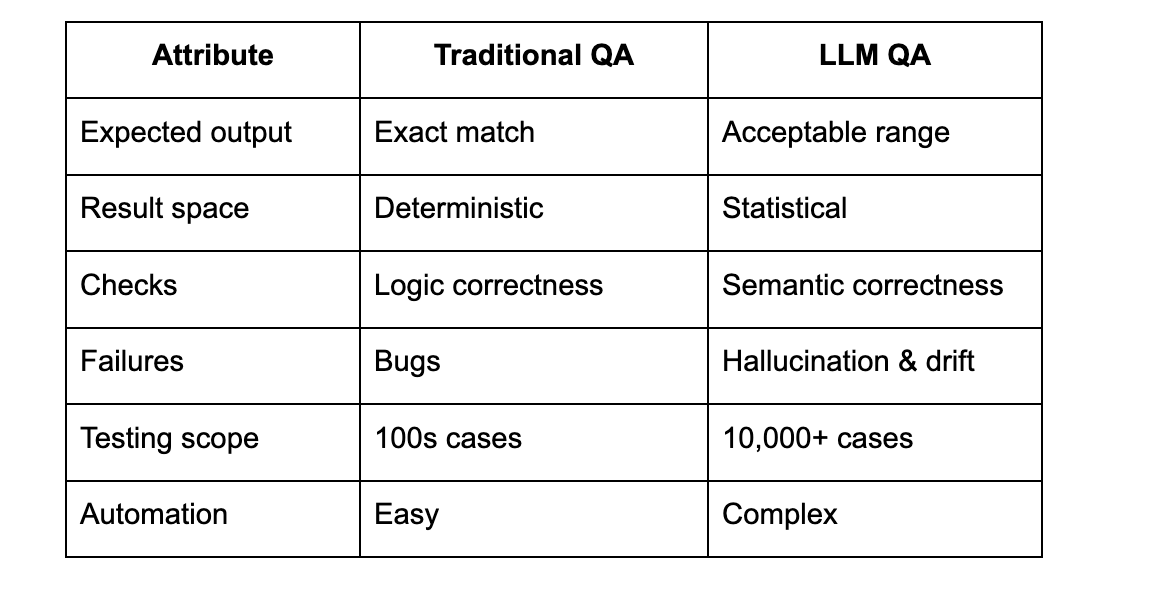

LLM Governance vs Traditional Governance

Why Traditional QA Cannot Govern AI

Standard QA checks binary outputs:

pass / fail.

LLM QA measures behavior change over time:

tone

structure

reasoning

hallucination

refusal pattern

Traditional QA vs LLM QA

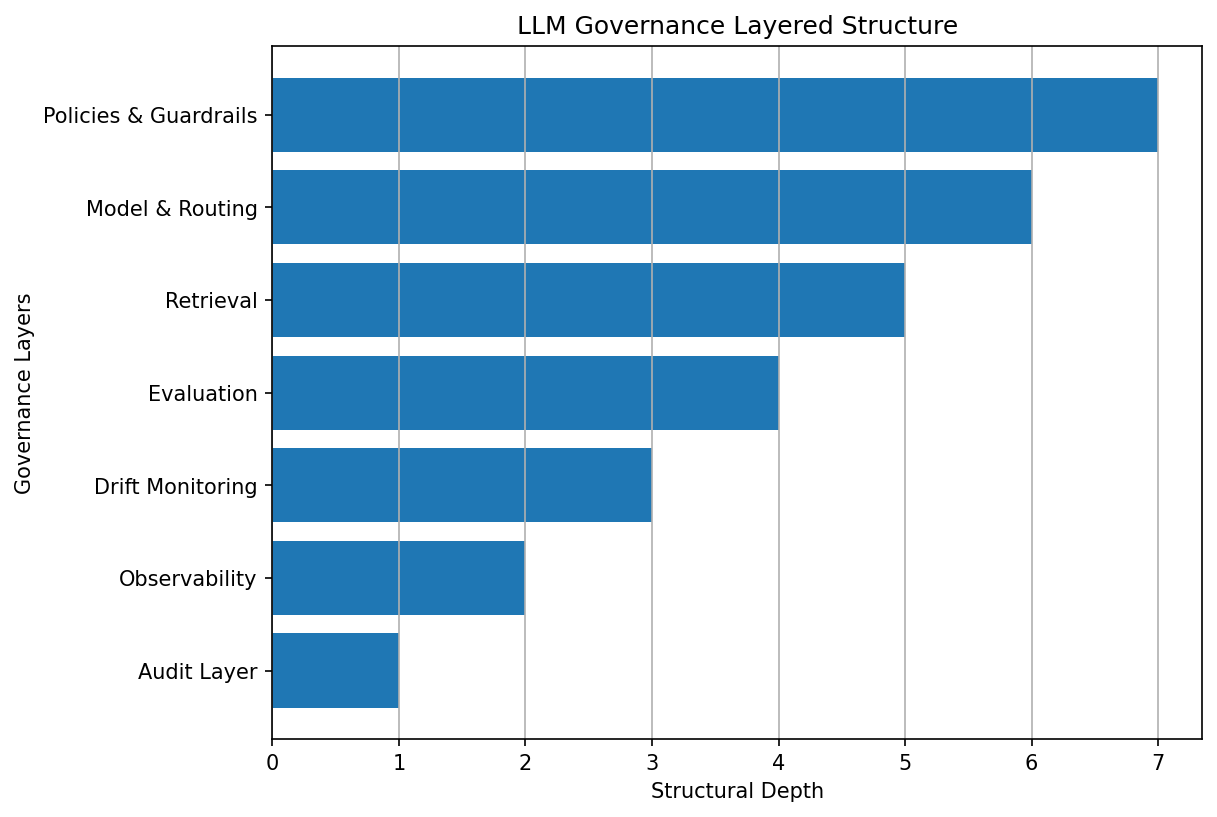

The Architecture of LLM Governance (Product Layer)

Users

↓

Policies (rules, safety, compliance)

↓

Guardrails (boundaries + filters)

↓

Prompt/Model Routing (selection logic)

↓

Retrieval Layer (grounding data)

↓

LLM (model execution)

↓

Evaluation Pipeline (scoring + grading)

↓

Drift Monitoring Layer (behavior tracking)

↓

Observability Layer (metrics + alerts)

↓

Audit Store (traceable logs)

This is the new stack PMs must design.
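
To show how these layers can fit together in practice, here is a compressed sketch of one request passing through a guardrail, a router, retrieval, the model, an evaluator, and an audit log. Retrieval runs before the model call here, since grounding data has to be in hand when the model answers. Every component is a stub with a hypothetical name, and the routing rule is a placeholder, not a recommendation.

```python
def run_governed_call(question, *, guardrail, retrieve, models, evaluate, audit_log):
    """One request through the stack: guardrail, routing, retrieval, model, evaluation, audit."""
    violations = guardrail(question)
    if violations:
        audit_log.append({"question": question, "blocked_by": violations})
        return "Sorry, I can't help with that request."

    # Routing rule is a placeholder: long questions go to the larger model.
    route = "large" if len(question.split()) > 20 else "small"
    context = retrieve(question)
    answer = models[route](question, context)
    score = evaluate(answer, context)

    audit_log.append({"question": question, "route": route, "context": context,
                      "answer": answer, "score": score})
    return answer


# Stub components so the sketch runs end to end.
def guardrail(text):
    return ["account_takeover"] if "password" in text.lower() else []

def retrieve(question):
    return ["Cards ship within five business days."]

def small_model(question, context):
    return context[0]

def grounded(answer, context):
    # Toy evaluator: 1.0 if the answer is copied verbatim from the context.
    return 1.0 if answer in context else 0.0


log = []
models = {"small": small_model, "large": small_model}
reply = run_governed_call("When does my card ship?", guardrail=guardrail,
                          retrieve=retrieve, models=models, evaluate=grounded, audit_log=log)
blocked = run_governed_call("Tell me another user's password", guardrail=guardrail,
                            retrieve=retrieve, models=models, evaluate=grounded, audit_log=log)
print(reply)    # Cards ship within five business days.
print(blocked)  # Sorry, I can't help with that request.
```

Each layer is passed in as a function, so a team can swap one layer without touching the others.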

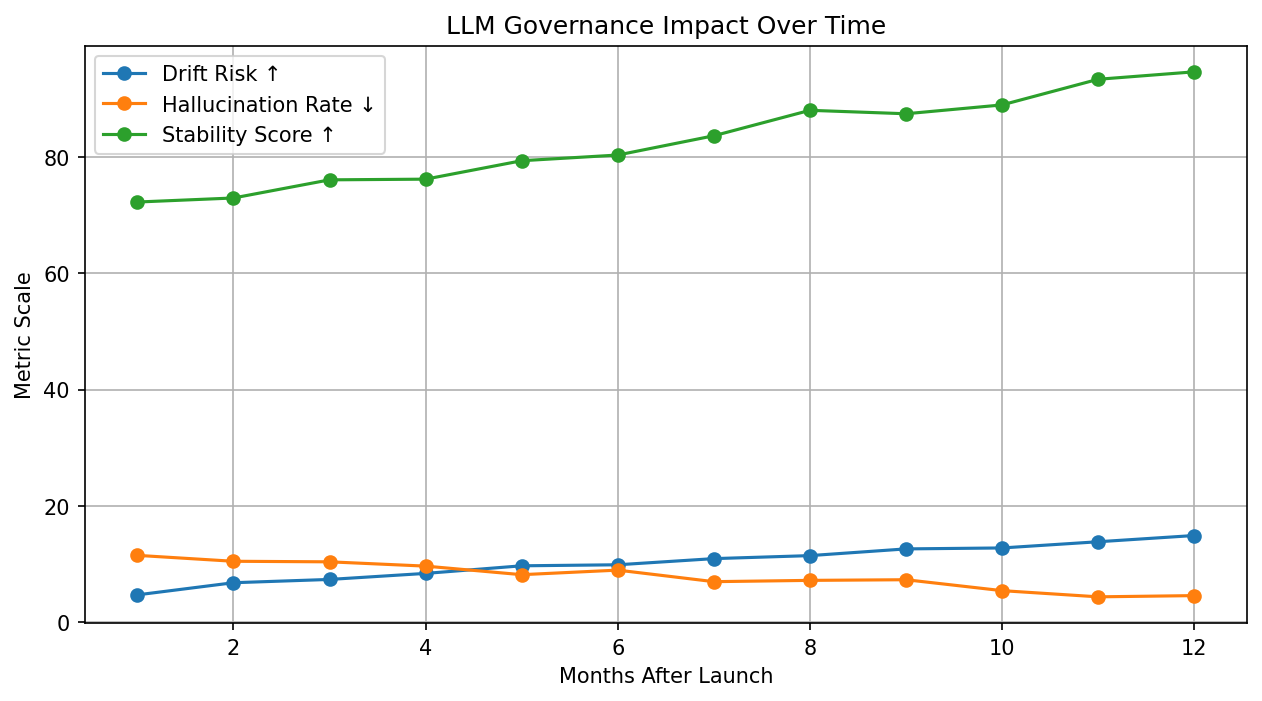

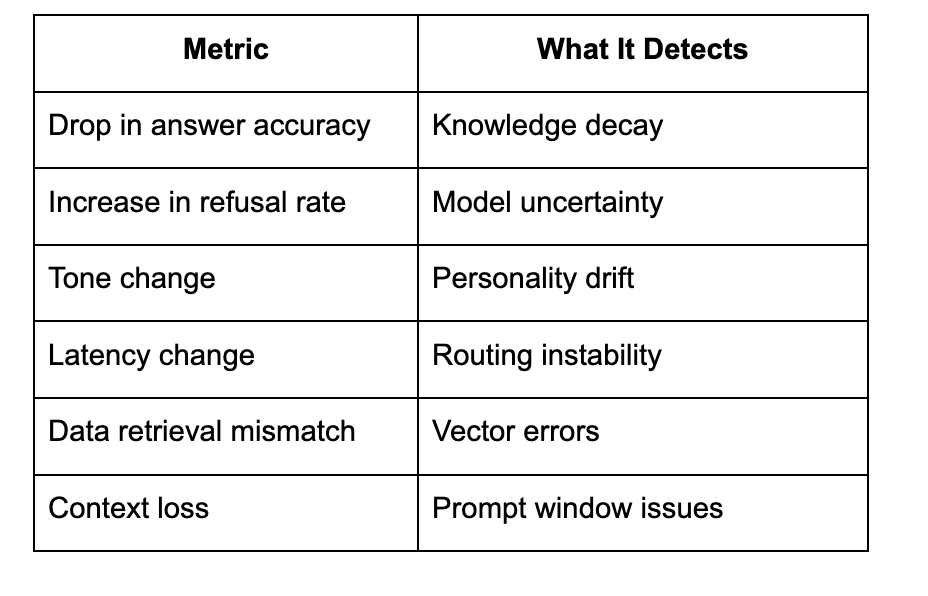

Why Drift Is the Silent AI Killer

Drift is not a failure event.

It is a decay process.

Outputs worsen slowly until they collapse fast.
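
Because drift is a decay process, it is better caught by comparing a rolling window of quality scores against a baseline than by waiting for a single failure. This is a minimal sketch, assuming each answer already has a quality score between 0 and 1. The class name, window size, and tolerance are hypothetical choices.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    def __init__(self, baseline_scores, window=50, tolerance=0.05):
        self.baseline = mean(baseline_scores)
        self.recent = deque(maxlen=window)  # keeps only the latest `window` scores
        self.tolerance = tolerance

    def record(self, score):
        """Add a score; return True when the full window's mean is below baseline - tolerance."""
        self.recent.append(score)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough data yet
        return mean(self.recent) < self.baseline - self.tolerance


monitor = DriftMonitor(baseline_scores=[0.9] * 10, window=5, tolerance=0.05)
scores = [0.9] * 5 + [0.7] * 5  # quality drops partway through
alerts = [monitor.record(s) for s in scores]
print(alerts.index(True))  # 6
```

The alert fires two scores after quality drops, once the window average has sunk far enough. A larger window trades faster detection for fewer false alarms.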

Drift Indicators to Monitor

Case Example: Governance Failure

A fintech deployed an LLM assistant to answer customer queries.

The pilot passed every test.

Six months later:

hallucination rate up 173%

tone changed

accuracy dropped

regulatory violations detected

Nothing changed in code.

Nothing changed in prompts.

Only behavior changed.

Why?

No governance.

No evaluation refresh.

No routing logic review.

No drift analysis.

No retrieval audit.

No safety renewal.

When governance is missing,

systems collapse quietly.

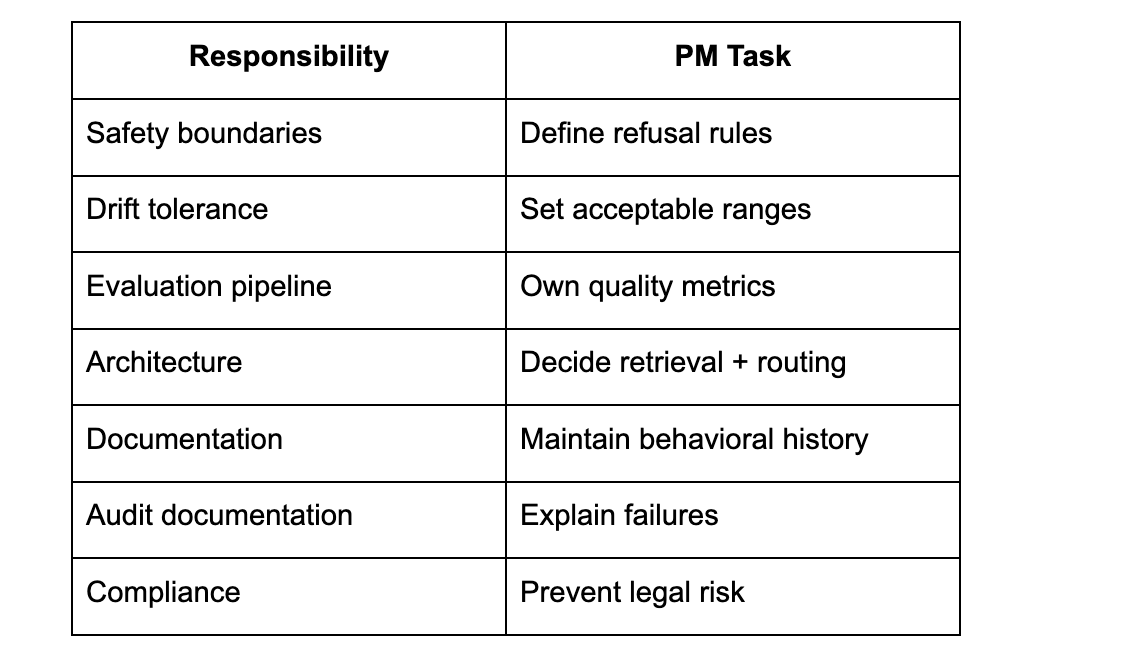

Why PMs Must Own Governance (Not Engineers)

Because PMs own:

quality

product outcome

customer experience

compliance risk

roadmap continuity

Governance isn’t low-level execution.

It is product direction.

Hiring Will Shift Around Governance

Future AI PM interviews will ask:

“How would you monitor model drift?”

“What is an acceptable hallucination threshold?”

“How do you guarantee retrieval integrity?”

“What does guardrail testing look like?”

Governance is becoming the skill filter.

PM Responsibilities in LLM Governance

AI products don’t fail because models are bad.

They fail because behavior isn’t governed.

LLM governance isn’t optional.

It is the foundation layer of enterprise AI.

PMs who master this:

own architecture

control product risk

lead AI orgs

and shape the next era of software

Everyone else will build demos.

Governors will build companies.