AI Features Aren’t Enough: The Shift to Owning AI Systems

The hidden responsibilities behind “AI-powered”

Most PMs today are building AI features.

Very few are owning AI systems.

That difference will define who stays relevant and who slowly loses influence in AI-driven teams.

AI hasn’t changed what PMs ship.

It has changed what PMs are responsible for.

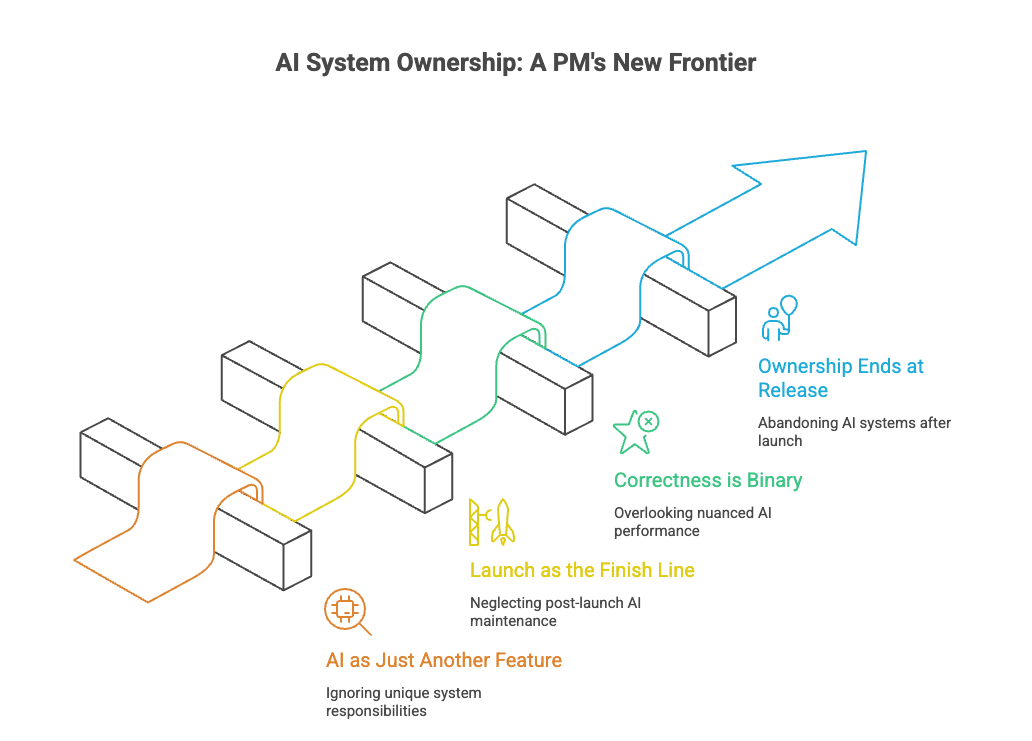

Yet most product organizations are still operating as if:

AI is just another feature

launch is the finish line

correctness is binary

ownership ends at release

That mental model no longer works.

This post explains the critical difference between building AI features and owning AI systems, why most PMs are stuck on the wrong side of it, and what true AI system ownership actually looks like.

Why This Distinction Matters Now

In traditional software:

features were discrete

behavior was deterministic

failure was obvious

ownership boundaries were clean

In AI products:

behavior is probabilistic

outputs evolve over time

failures are subtle

responsibility is blurred

That means PMs can no longer optimize for:

“Did we ship the feature?”

They must optimize for:

“Does the system continue to behave correctly?”

That is not feature thinking.

That is system ownership.

What Building AI Features Looks Like

Most PMs today are doing this, often unknowingly.

Feature-oriented AI PM behavior:

define an AI capability (summarize, recommend, chat)

write PRDs describing user flows

collaborate on prompt design

launch behind a feature flag

track adoption metrics

move on to the next roadmap item

This feels productive.

It looks like progress.

But it assumes AI behaves like software.

It doesn’t.

What Owning AI Systems Looks Like

System-oriented PMs think very differently.

They focus on:

behavior over time

failure modes

quality decay

human fallback paths

evaluation pipelines

governance boundaries

They don’t ask:

“Did we ship?”

They ask:

“What happens when this degrades?”

That single question changes everything.

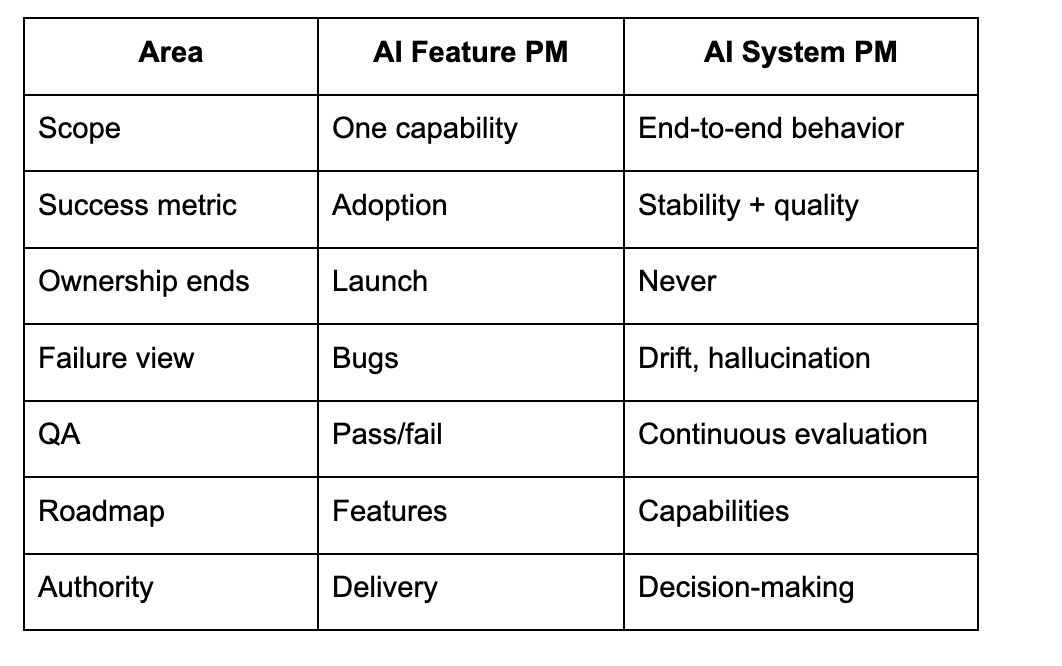

Feature PM vs System PM

This is not a maturity ladder.

It’s a mindset shift.

Why Feature Thinking Fails for AI

1. AI Does Not Stay the Same After Launch

Models drift.

Data changes.

User behavior evolves.

A feature mindset assumes stability.

AI guarantees instability.

2. AI Failures Are Behavioral, Not Binary

There is no “broken” state.

There is only:

less accurate

less grounded

more hallucination-prone

subtly wrong

Feature metrics don’t catch this.

3. Ownership Gets Diffused

When AI fails, teams ask:

Was it the model?

The prompt?

The data?

The infrastructure?

User misuse?

Without a system owner, no one truly owns the outcome.

That owner must be the PM.

The Core Shift: From Shipping to Stewardship

Owning AI systems means PMs move from:

planners to stewards

writers to operators

deliverers to governors

Their job becomes:

defining acceptable behavior

deciding error boundaries

monitoring degradation

triggering interventions

explaining failures to leadership

This work is ongoing.

It doesn’t fit neatly into sprints.

That’s why many PMs unconsciously avoid it.

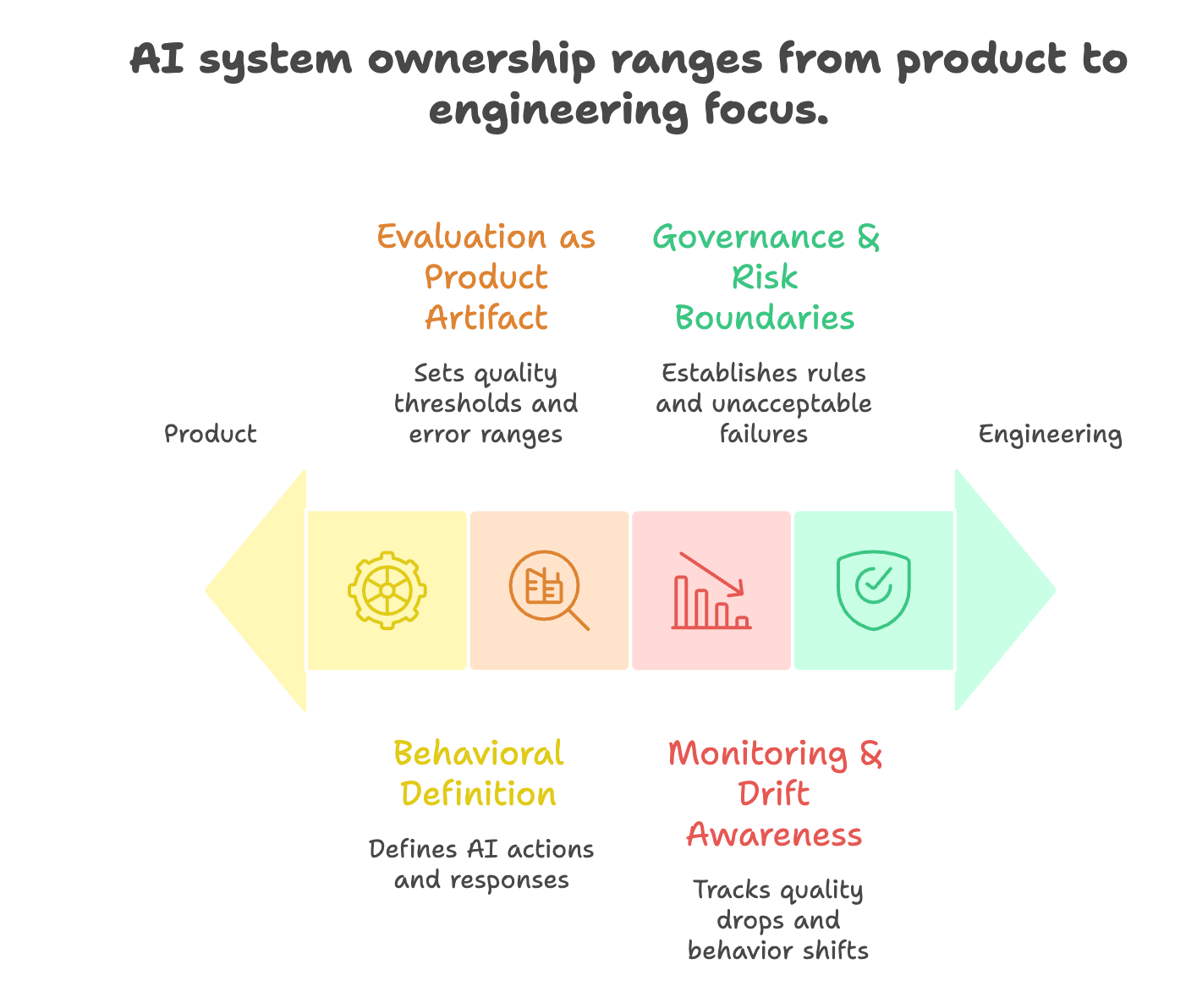

What AI System Ownership Actually Includes

Here is what system PMs explicitly own:

1. Behavioral Definition

Not just what the AI does, but:

when it should refuse

when it should escalate

when it should ask for clarification

when it should fall back

This is product work, not engineering.
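One way to make these four decisions concrete is to write them down as an explicit, ordered policy. The sketch below is illustrative only: the signal names (`violates_policy`, `high_stakes`, `ambiguous`, `confidence`) and the 0.7 confidence floor are assumptions, not something a specific model or framework provides.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ANSWER = "answer"
    REFUSE = "refuse"
    ESCALATE = "escalate"
    CLARIFY = "clarify"
    FALLBACK = "fallback"


@dataclass
class RequestSignals:
    # Hypothetical signals an upstream pipeline might attach to a request.
    violates_policy: bool  # request hits a "must never do" rule
    high_stakes: bool      # e.g. legal, medical, or billing impact
    ambiguous: bool        # user intent could not be determined
    confidence: float      # model or retrieval confidence in [0, 1]


def decide(signals: RequestSignals, min_confidence: float = 0.7) -> Action:
    """Map signals to a behavior, checking the strictest rule first."""
    if signals.violates_policy:
        return Action.REFUSE
    if signals.high_stakes:
        return Action.ESCALATE
    if signals.ambiguous:
        return Action.CLARIFY
    if signals.confidence < min_confidence:
        return Action.FALLBACK
    return Action.ANSWER
```

The order of the checks is itself a product decision: here a policy violation wins over everything else, and a low-confidence answer falls back rather than shipping to the user.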

2. Evaluation as a Product Artifact

System PMs own:

evaluation criteria

quality thresholds

acceptable error ranges

trade-offs between accuracy, latency, and cost

They don’t treat evals as QA.

They treat evals as product truth.
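As a rough illustration, quality thresholds can live in a small, versioned artifact that a release check reads. The metric names and limits below are made-up placeholders; the real numbers are exactly the trade-off between accuracy, latency, and cost that the PM has to decide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    name: str
    limit: float
    higher_is_better: bool

    def passes(self, value: float) -> bool:
        if self.higher_is_better:
            return value >= self.limit
        return value <= self.limit


# Placeholder limits, assumed for illustration only.
THRESHOLDS = [
    Threshold("accuracy", 0.90, higher_is_better=True),
    Threshold("p95_latency_s", 2.0, higher_is_better=False),
    Threshold("cost_per_request_usd", 0.01, higher_is_better=False),
]


def failed_thresholds(results: dict[str, float]) -> list[str]:
    """Return the names of missed thresholds; a missing metric counts as a miss."""
    failures = []
    for threshold in THRESHOLDS:
        value = results.get(threshold.name)
        if value is None or not threshold.passes(value):
            failures.append(threshold.name)
    return failures
```

With this in place, an eval run that is accurate and cheap but too slow fails on `p95_latency_s` alone, which makes the trade-off visible instead of buried in a QA report.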

3. Monitoring & Drift Awareness

Owning a system means knowing:

when quality drops

when behavior shifts

when the system grows more confident without becoming more accurate

when outputs diverge from intent

If PMs don’t own this, no one does.
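A minimal sketch of what that awareness could look like in practice, assuming you already log a daily quality score and, for a sample of outputs, the system's stated confidence plus whether the output was actually correct. The window size, tolerance, and function names are illustrative assumptions.

```python
from statistics import mean


def quality_dropped(scores: list[float], baseline: float,
                    window: int = 7, tolerance: float = 0.05) -> bool:
    """True when the mean of the last `window` scores is more than
    `tolerance` below the baseline agreed at launch."""
    if len(scores) < window:
        return False  # not enough data to judge yet
    return mean(scores[-window:]) < baseline - tolerance


def overconfidence_gap(confidences: list[float], correct: list[bool]) -> float:
    """Mean stated confidence minus observed accuracy.
    A large positive gap means the system sounds surer than it should."""
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need equally sized, non-empty samples")
    accuracy = sum(correct) / len(correct)
    return mean(confidences) - accuracy
```

Neither check says why quality moved. They only tell the owner that it is time to look, which is the point.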

4. Governance & Risk Boundaries

AI systems need explicit rules:

what the system must never do

what data it can access

what outputs require review

what failures are unacceptable

This is product judgment, not compliance paperwork.
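These rules are easier to enforce, and to argue about, when they are written as data rather than left in a slide. The sketch below assumes each request has already been tagged with a topic and the data sources it touched; the topic and source names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GovernancePolicy:
    allowed_data_sources: frozenset[str]    # what data the system can access
    forbidden_topics: frozenset[str]        # what it must never do
    review_required_topics: frozenset[str]  # what outputs require review


def check(policy: GovernancePolicy, topic: str, data_sources: list[str]) -> str:
    """Return 'block', 'review', or 'allow' for one request."""
    if topic in policy.forbidden_topics:
        return "block"
    if not set(data_sources) <= policy.allowed_data_sources:
        return "block"  # touched a source outside the allowed set
    if topic in policy.review_required_topics:
        return "review"
    return "allow"


# Example policy with made-up names.
policy = GovernancePolicy(
    allowed_data_sources=frozenset({"help_center"}),
    forbidden_topics=frozenset({"medical_advice"}),
    review_required_topics=frozenset({"refunds"}),
)
```

Deciding which topics land in which set is the product judgment; the code only makes that judgment explicit and testable.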

Why Many PMs Get Stuck at Feature Level

Three reasons:

1. Comfort with Traditional PM Skills

Roadmaps, PRDs, and stakeholder alignment are familiar territory.

System ownership requires:

technical literacy

probabilistic thinking

ambiguity tolerance

long-term accountability

Not all PMs are ready for that shift.

2. Organizational Incentives

Most orgs reward:

launches

visibility

delivery velocity

They don’t reward:

preventing failures

reducing drift

maintaining stability

The work system PMs do often stays invisible until something breaks.

3. Fear of Responsibility

Owning systems means owning failures without clear blame.

Many PMs prefer shared responsibility.

AI systems punish that mindset.

How PMs Transition from Feature Builders to System Owners

This transition is learnable.

Step 1: Change the Question You Ask

Stop asking:

“What should we build next?”

Start asking:

“What could go wrong over time?”

Step 2: Own One System Metric

Pick one:

hallucination rate

eval score

fallback frequency

user correction rate

Make it your metric.
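Owning a metric starts with being able to compute it yourself. Here is a small sketch for two of the options above, assuming each logged interaction is a dict with boolean `fell_back` and `user_corrected` fields. That log shape is an assumption; adapt it to whatever your system actually records.

```python
def rate(interactions: list[dict], flag: str) -> float:
    """Share of interactions where `flag` is set; 0.0 for an empty log."""
    if not interactions:
        return 0.0
    hits = sum(1 for item in interactions if item.get(flag))
    return hits / len(interactions)


# Hypothetical log of four interactions.
log = [
    {"fell_back": False, "user_corrected": False},
    {"fell_back": True, "user_corrected": False},
    {"fell_back": False, "user_corrected": True},
    {"fell_back": False, "user_corrected": False},
]

fallback_frequency = rate(log, "fell_back")
user_correction_rate = rate(log, "user_corrected")
```

Tracked weekly, either number gives you a trend line to defend in roadmap discussions.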

Step 3: Redefine the Roadmap

Replace feature items with:

improve grounding reliability

reduce error variance

add fallback paths

tighten behavior boundaries

This reframes how leadership sees AI work.

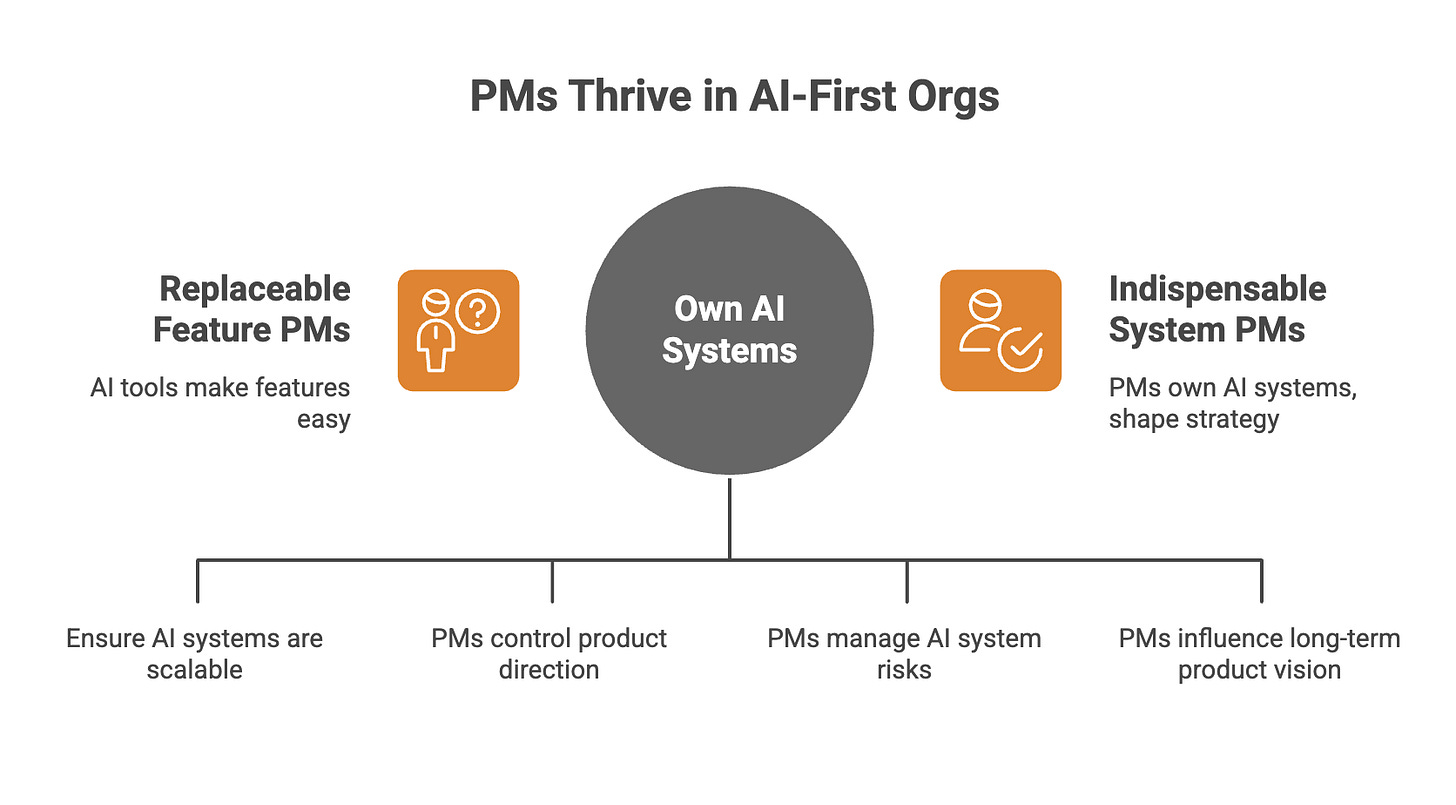

The Career Implication PMs Miss

In AI-first orgs:

feature PMs become replaceable

system PMs become indispensable

Why?

Because anyone can ship a feature with AI tools.

Very few people can keep AI systems reliable at scale.

That skill compounds.

Building AI features is table stakes.

Owning AI systems is the job.

PMs who stop at features will:

lose influence

get bypassed in decisions

be seen as coordinators

PMs who own systems will:

define product truth

control risk

shape strategy

earn long-term trust

AI didn’t eliminate the PM role.

It raised it.

But only for PMs willing to move

from shipping things

to owning behavior.
